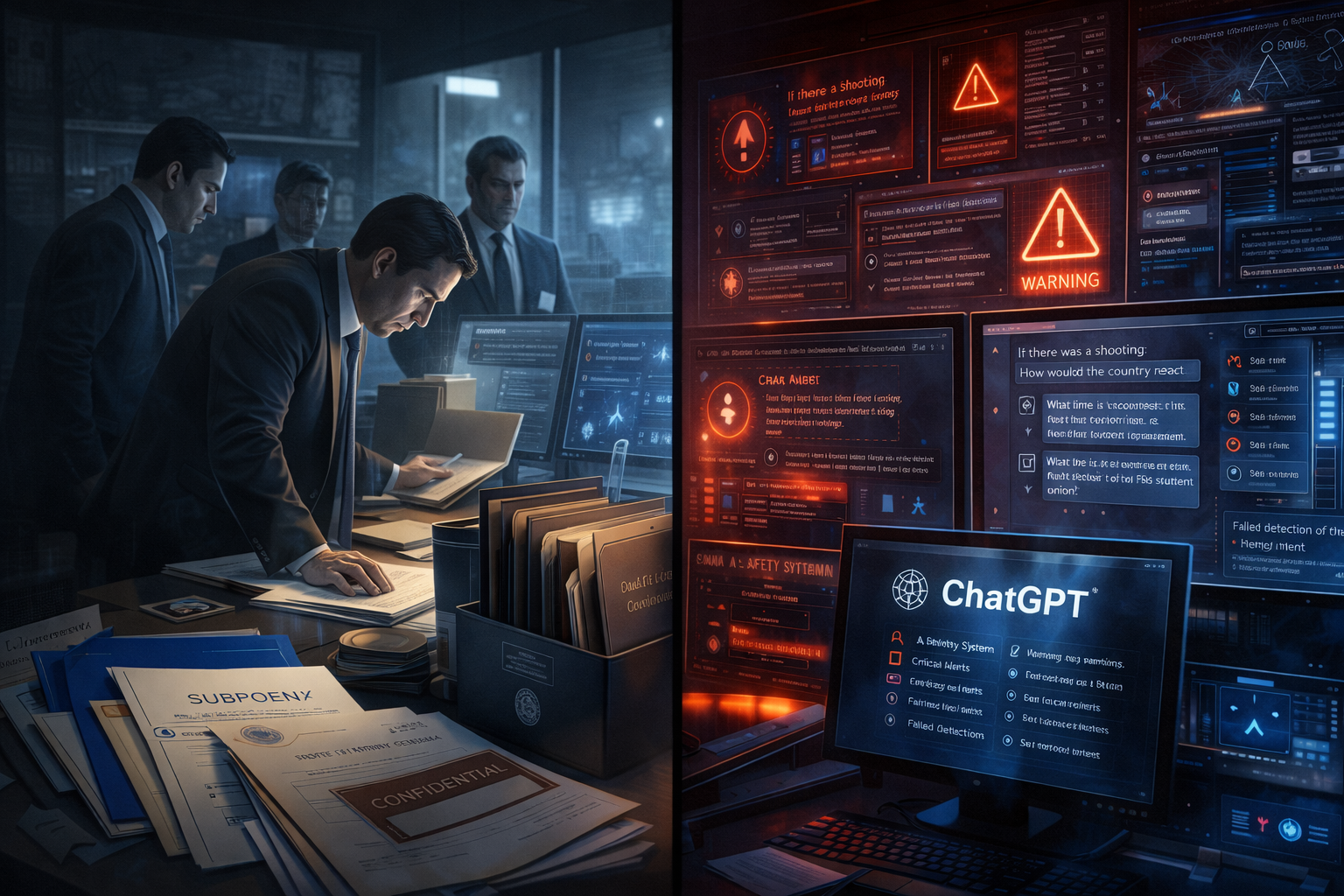

Editorial illustration of Florida’s investigation into OpenAI and ChatGPT over alleged failures to detect harmful intent before the 2025 Florida State University shooting.

Florida Attorney General James Uthmeier has opened a formal investigation into OpenAI and ChatGPT. His office alleges the chatbot helped plan the April 2025 Florida State University mass shooting. The attack killed two people and injured five.

Court filings reveal that the accused shooter entered more than 200 prompts into ChatGPT before the attack. Attorneys for the wife of victim Robert Morales plan to sue OpenAI over those prompts. Uthmeier stated: “AI should advance mankind, not destroy it. We’re demanding answers on OpenAI’s activities that have hurt kids, endangered Americans, and facilitated the recent FSU mass shooting. Wrongdoers must be held accountable.” He confirmed that subpoenas are forthcoming.

Why It Matters

This is the most consequential state-level law enforcement action against a major AI company to date. It arrives with specific allegations – not general safety concerns. The suspect asked ChatGPT direct questions before the shooting. He inquired about public reaction to a campus attack. He also asked when the FSU student union was busiest. Those prompts shift the legal argument from theoretical harm into documented pre-attack planning.

The investigation hits OpenAI at a difficult moment. A company spokesperson confirmed OpenAI will cooperate and noted that more than 900 million people use ChatGPT each week. For the AI ethics field, the probe raises a harder question: whether AI companies bear liability not for content they produce, but for failing to flag harmful intent across long conversation histories.

Florida Congressman Jimmy Patronis sees the case as evidence for the SHIELD Act. That legislation would repeal Section 230 of the Communications Decency Act. Section 230 currently shields online platforms from liability for third-party content. If courts strip that protection from AI companies, the industry faces structural liability exposure – not just case-by-case risk.

What’s Next

The subpoenas are expected to require OpenAI to hand over internal safety flagging data from the shooter’s conversation history. That is a level of transparency the company has never faced in a law enforcement context. The disclosure will either validate OpenAI’s safety systems or expose a failure to detect explicit planning signals across more than 200 prompts.

The Morales family civil lawsuit runs on a separate track. It may reach discovery before the AG investigation concludes. Together, the two legal processes create compounding pressure on OpenAI’s safety and compliance teams. That pressure arrives precisely as the company prepares for a potential IPO. Furthermore, Florida is unlikely to act alone. Other states are watching this template closely.

Sources: NBC News · TechCrunch · WFSU News