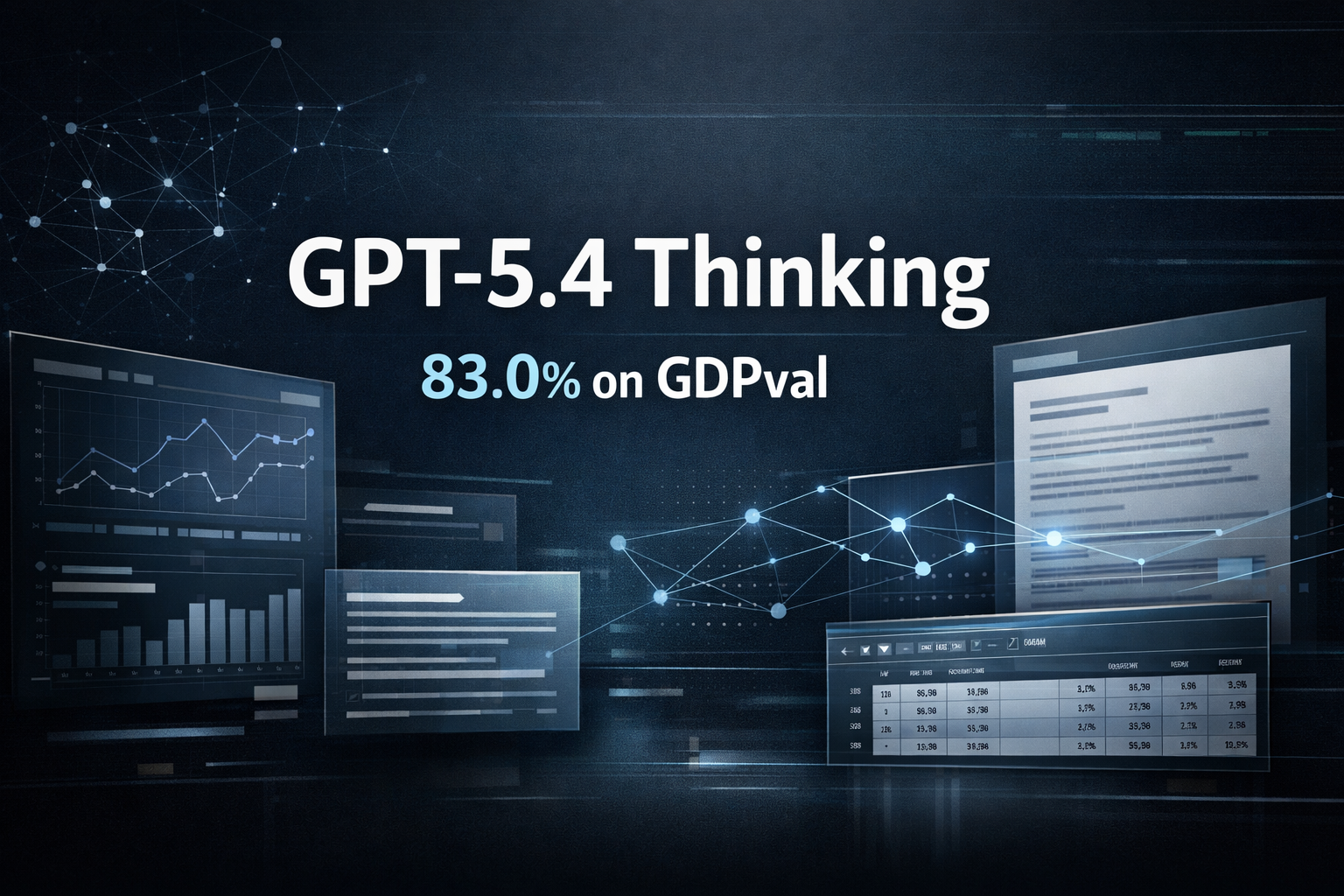

OpenAI says GPT-5.4 Thinking reached 83.0% on the GDPval benchmark.

OpenAI says its newly released GPT-5.4 has reached 83.0% on GDPval, a benchmark designed to evaluate how well AI systems perform economically valuable, real-world professional tasks. According to the company, GPT-5.4 is available in ChatGPT as GPT-5.4 Thinking and is positioned as its most capable model for professional work to date.

What GDPval measures

GDPval is an OpenAI evaluation framework focused on realistic knowledge-work tasks rather than trivia-style benchmark questions. OpenAI says the benchmark spans 44 occupations across 9 major industries that contribute to U.S. GDP, an approach intended to give a clearer view of how models perform on practical work with economic value.

In OpenAI’s published results, GPT-5.4 scored 83.0% on GDPval, up from 70.9% for GPT-5.2, a gain of 12.1 percentage points. The same release also reports gains in coding, computer use, tool use, and other professional evaluations, which suggests that the model’s improvements are not limited to a single benchmark.

Why the result matters

The GDPval score is notable because it shifts the discussion away from academic test-taking and toward task completion in professional settings. OpenAI frames GPT-5.4 as a model built for reasoning, coding, agentic workflows, and professional work involving spreadsheets, presentations, and documents.

That matters for companies evaluating generative AI for internal workflows. Instead of asking whether a model is good at general Q&A, enterprise buyers are increasingly asking whether it can produce useful outputs in areas such as research, analysis, operations, and document-heavy work. GDPval is more closely aligned with that question than many traditional AI benchmarks.

What OpenAI is claiming – and what it is not

OpenAI’s published materials support the claim that GPT-5.4 represents a meaningful step forward for professional tasks. The company describes it as more capable in long-context reasoning, tool-heavy workflows, and knowledge work, while also emphasizing better token efficiency compared with GPT-5.2.

At the same time, the benchmark result should be read carefully. A strong GDPval score does not mean the model can replace experts across every high-stakes domain without supervision. It means that, in OpenAI’s own evaluation setup, GPT-5.4 performed strongly on a large set of realistic professional tasks. That distinction matters for businesses, regulators, and readers trying to interpret the announcement accurately.

Market context

The result arrives during a period of intense competition in frontier AI, with major labs racing to improve reasoning, coding, tool use, and real-world task execution. OpenAI’s launch materials position GPT-5.4 as a broader professional model rather than a narrow benchmark specialist, with rollout across ChatGPT, the API, and Codex.

For enterprise teams, the bigger takeaway may be practical rather than symbolic: models are being evaluated less on abstract intelligence and more on whether they can produce useful work products inside real workflows. On that front, GPT-5.4’s GDPval result is a meaningful signal.

Bottom line

GPT-5.4’s 83.0% GDPval score is one of the clearest signs yet that frontier AI models are improving on tasks that resemble real professional work. The headline is strong enough without overstatement. The more important story is that benchmark design is moving closer to the economic tasks businesses actually care about, and AI model development is moving with it.